Arcana

Project Overview

Arcana is an AI-powered tarot companion that guides users through card readings via a conversational chat interface. The AI backend — responsible for the tarot knowledge and interpretation logic — was developed by the client's team. Our responsibility was the Flutter mobile client: a performant, polished chat experience on both iOS and Android.

The nature of the product created an immediate technical challenge. Chat sessions grow long. Users return daily, accumulate readings, and the message history can reach thousands of entries. At the same time, the AI streams its responses in real time — outputting formatted text that includes bold interpretations, italicized card names, headers, and bullet-point breakdowns. Building a chat screen that handles both of these gracefully — at 60 fps on budget hardware — was the core of our work.

Daily Card Draw

Streaming Chat Response

Card Spread Reading

Real-Time Streaming via SSE

The AI responses arrive as a stream of tokens, not a single payload. We implemented a Server-Sent Events (SSE) client in Flutter that opens a persistent HTTP connection to the backend and processes the token stream as it arrives. Each incoming chunk is appended to the active message in the chat, giving users the familiar "typing" effect — watching the interpretation appear word by word.

Handling SSE in Flutter required careful management of the connection lifecycle: reconnecting on network interruptions without losing partial content, buffering incomplete UTF-8 sequences at chunk boundaries, and ensuring the UI rebuilds only the affected message widget rather than the entire list on each token arrival.

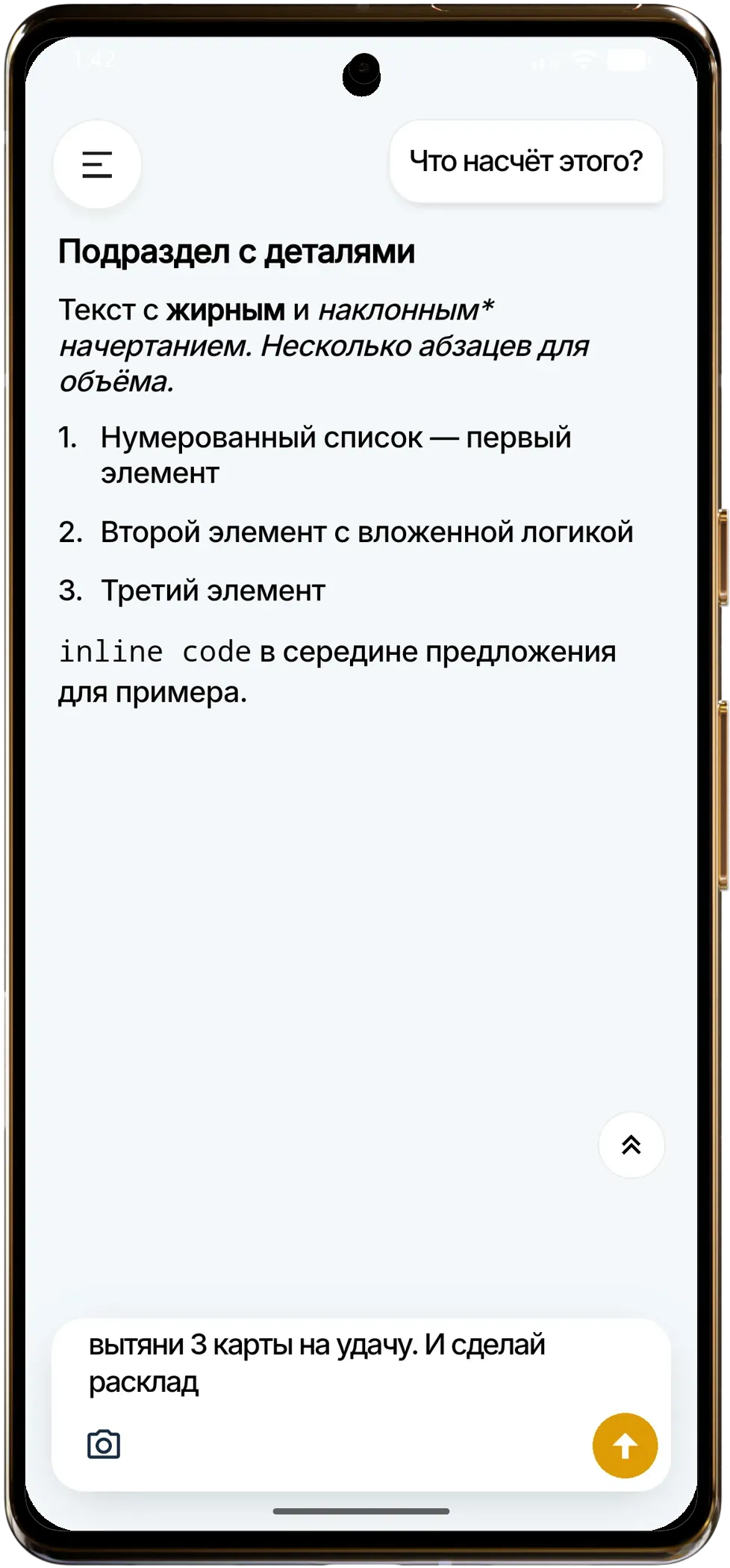

On-the-Fly Markdown Rendering

Because the AI formats its responses in Markdown — using bold for card names, italics for keywords, headers for spread sections, and lists for key points — the chat renderer needed to parse and display Markdown incrementally, updating in real time as new tokens stream in.

We built a custom incremental Markdown renderer that processes the growing string on each update without re-parsing the entire message from scratch. Partial tokens at the end of the current buffer (an unclosed ** or a half-written heading) are held in a pending state and rendered as plain text until the delimiter is resolved. This avoids flicker and layout jank while maintaining correct formatting as the response completes.

Performance Benchmarking on Low-End Devices

Long chat histories are a given for a daily-use app. We designed the system to handle 5,000+ messages without degradation, and we validated this with structured benchmarks on low-tier Android devices — phones representative of the budget segment where Flutter performance pressure is highest.

Our benchmark suite scrolled through chat lists of varying sizes (500, 2,000, and 5,000 messages) while simultaneously streaming an active SSE response, measuring frame times throughout. Initial builds showed frame drops during fast scrolling at the 2,000+ message mark as layout passes for Markdown-rendered bubbles became expensive.

We addressed this with several targeted optimizations:

Lazy layout caching: Rendered Markdown layouts are cached by message ID. Once a message bubble has been laid out, its dimensions and widget tree are reused on subsequent scroll passes rather than recomputed.

Sliver-based virtualization: The chat list uses a SliverList with a custom delegate that avoids building off-screen widgets. Only messages within or near the visible viewport are instantiated, keeping the widget tree shallow regardless of total message count.

Isolated stream rebuilds: The streaming message at the bottom of the list is managed independently from the historical list. SSE token updates trigger a targeted rebuild of only the active bubble widget, leaving the virtualized list completely untouched.

After these optimizations, frame time stayed consistently below 16 ms across all benchmark scenarios — including 5,000-message histories with an active stream — on the target low-tier devices.

Results

The final client delivers a chat experience that feels fluid and responsive at any history depth, on any device in the target range. Streaming responses render smoothly in real time with correct Markdown formatting throughout, and long-term users accumulating hundreds of sessions never encounter slowdowns. The performance foundation we established gives the product room to grow without revisiting the rendering architecture.

Project Details

- Date

- February 10, 2026

- Duration

- 3 Weeks

Interested in a similar project?

Let's discuss how we can help bring your vision to life.

Get in Touch